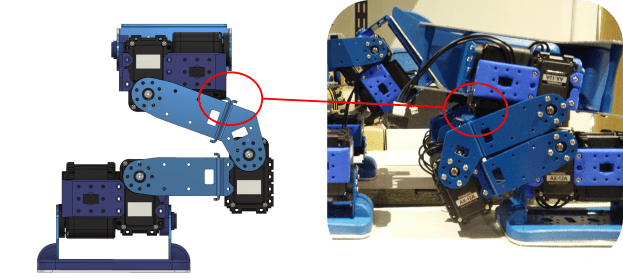

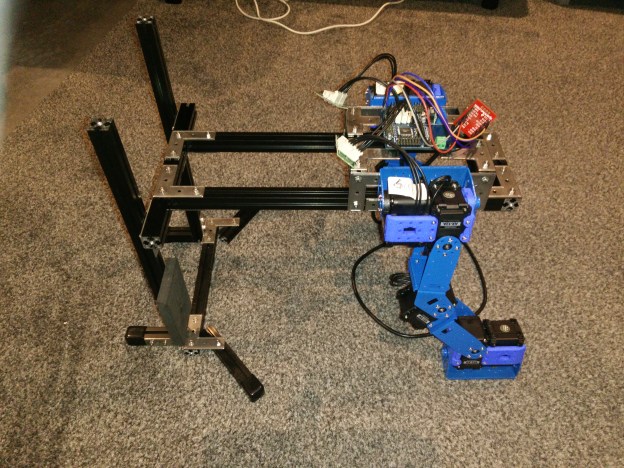

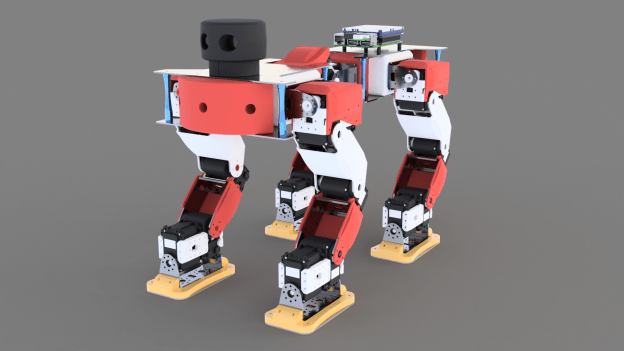

The hardware for the test rig has arrived, and the first leg has been set up!

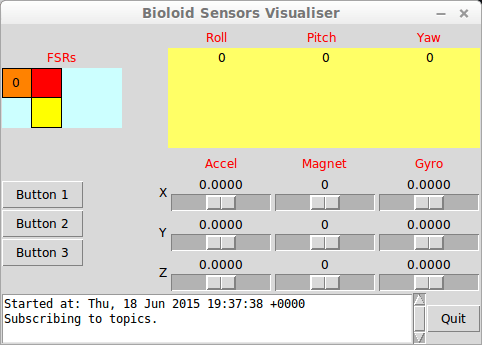

The metal brackets are from Trossen Robotics and largely match the dimensions of their equivalent plastic parts from Robotis. Some parts do not have metal counterparts, so I used spare plastic parts from my Bioloid kit. I also ordered an ArbotiX-M controller (Arduino-based), which communicates with the PC via a spare SparkFun FT232RL I had. The test rig frame is made out of MakerBeam XL aluminium profiles.

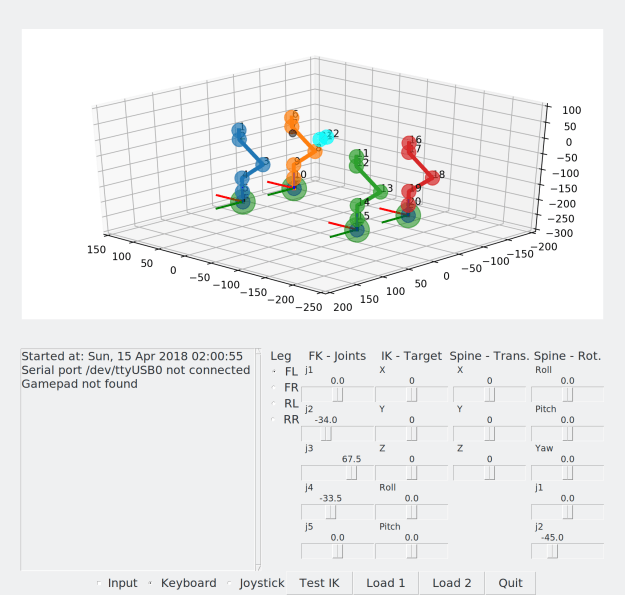

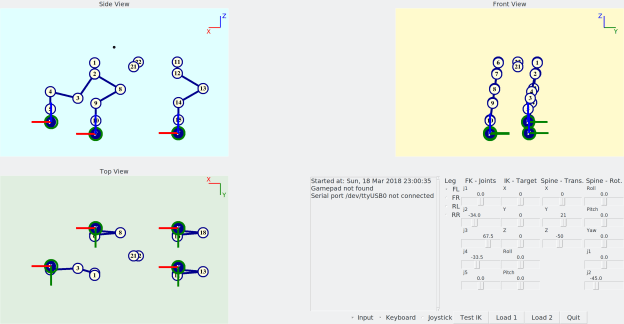

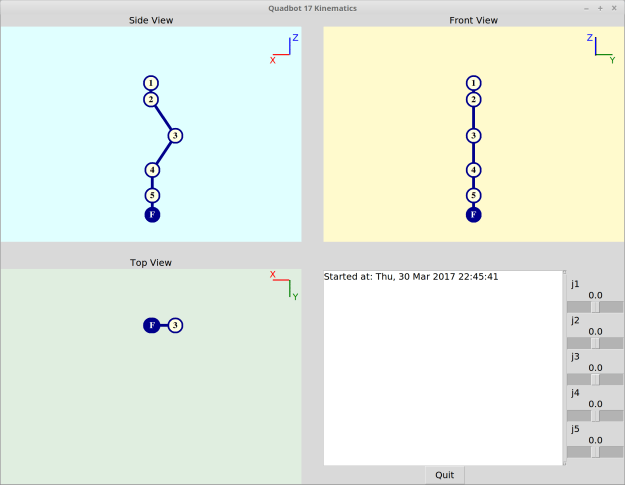

Unfortunately, I thought I had more 3-pin cables than I actually did, so I can’t control all the motors just yet. However, I’ve got an Xbox One controller controlling the IK’s foot target position and a simple serial program on the ArbotiX-M which receives the resulting position commands for each motor.

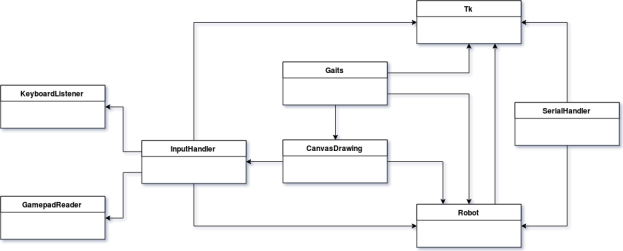

The code for both the Python applet and the Arduino can be found on GitHub here.

Some of the more useful snippets of code are shown below:

The following code handles gamepad inputs and converts them to a natural-feeling movement, based on equations of motion. The gamepad input gets converted into a virtual force, which is opposed by a drag proportional to the current velocity. The angles resulting from the IK are sent to the controller via serial port:

class GamepadHandler(threading.Thread):

def __init__(self, master):

self.master = master

# Threading vars

threading.Thread.__init__(self)

self.daemon = True # OK for main to exit even if instance is still running

self.paused = False

self.triggerPolling = True

self.cond = threading.Condition()

# Input vars

devices = DeviceManager()

self.target = targetHome[:]

self.speed = [0, 0, 0]

self.inputLJSX = 0

self.inputLJSY = 0

self.inputRJSX = 0

self.inputRJSY = 0

self.inputLJSXNormed = 0

self.inputLJSYNormed = 0

self.inputRJSXNormed = 0

self.inputRJSYNormed = 0

self.dt = 0.005

def run(self):

while 1:

with self.cond:

if self.paused:

self.cond.wait() # Block until notified

self.triggerPolling = True

else:

if self.triggerPolling:

self.pollInputs()

self.pollIK()

self.pollSerial()

self.triggerPolling = False

# Get joystick input

try:

events = get_gamepad()

for event in events:

self.processEvent(event)

except:

pass

def pause(self):

with self.cond:

self.paused = True

def resume(self):

with self.cond:

self.paused = False

self.cond.notify() # Unblock self if waiting

def processEvent(self, event):

#print(event.ev_type, event.code, event.state)

if event.code == 'ABS_X':

self.inputLJSX = event.state

elif event.code == 'ABS_Y':

self.inputLJSY = event.state

elif event.code == 'ABS_RX':

self.inputRJSX = event.state

elif event.code == 'ABS_RY':

self.inputRJSY = event.state

def pollInputs(self):

# World X

self.inputLJSYNormed = self.filterInput(-self.inputLJSY)

self.target[0], self.speed[0] = self.updateMotion(self.inputLJSYNormed, self.target[0], self.speed[0])

# World Y

self.inputLJSXNormed = self.filterInput(-self.inputLJSX)

self.target[1], self.speed[1] = self.updateMotion(self.inputLJSXNormed, self.target[1], self.speed[1])

# World Z

self.inputRJSYNormed = self.filterInput(-self.inputRJSY)

self.target[2], self.speed[2] = self.updateMotion(self.inputRJSYNormed, self.target[2], self.speed[2])

with self.cond:

if not self.paused:

self.master.after(int(self.dt*1000), self.pollInputs)

def pollIK(self):

global target

target = self.target[:]

runIK(target)

with self.cond:

if not self.paused:

self.master.after(int(self.dt*1000), self.pollIK)

def pollSerial(self):

if 'ser' in globals():

global ser

global angles

writeStr = ""

for i in range(len(angles)):

x = int( rescale(angles[i], -180.0, 180.0, 0, 1023) )

writeStr += str(i+1) + "," + str(x)

if i 3277) or (i 3277:

oldMax = 32767

elif i < -3277:

oldMax = 32768

inputNormed = math.copysign(1.0, abs(i)) * rescale(i, 0, oldMax, 0, 1.0)

else:

inputNormed = 0

return inputNormed

def updateMotion(self, i, target, speed):

c1 = 10000.0

c2 = 10.0

mu = 1.0

m = 1.0

u0 = speed

F = c1*i - c2*u0 # Force minus linear drag

a = F/m

t = self.dt

x0 = target

# Equations of motion

u = u0 + a*t

x = x0 + u0*t + 0.5*a*pow(t, 2)

# Update self

target = x

speed = u

return target, speed

def rescale(old, oldMin, oldMax, newMin, newMax):

oldRange = (oldMax - oldMin)

newRange = (newMax - newMin)

return (old - oldMin) * newRange / oldRange + newMin

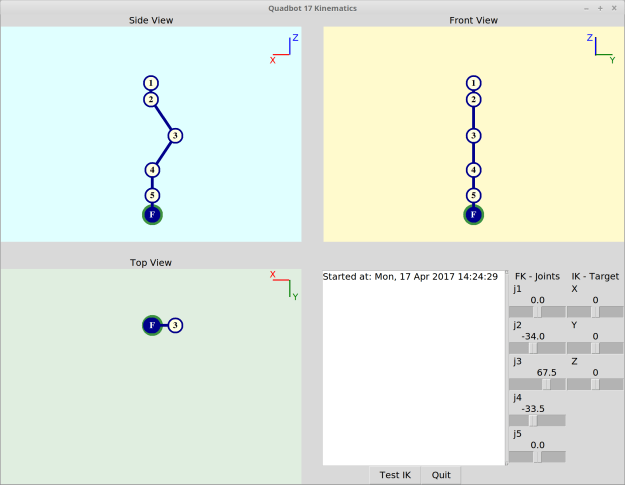

The following Python code is the leg’s Forward Kinematics (click to expand):

def runFK(angles):

global a

global footOffset

global T_1_in_W

global T_2_in_W

global T_3_in_W

global T_4_in_W

global T_5_in_W

global T_F_in_W

a = [0, 0, 29.05, 76.919, 72.96, 45.032] # Link lengths "a-1"

footOffset = 33.596

s = [0, 0, 0, 0, 0, 0]

c = [0, 0, 0, 0, 0, 0]

for i in range(1,6):

s[i] = math.sin( math.radians(angles[i-1]) )

c[i] = math.cos( math.radians(angles[i-1]) )

# +90 around Y

T_0_in_W = np.matrix( [ [ 0, 0, 1, 0],

[ 0, 1, 0, 0],

[ -1, 0, 0, 0],

[ 0, 0, 0, 1] ] )

T_1_in_0 = np.matrix( [ [ c[1], -s[1], 0, a[1]],

[ s[1], c[1], 0, 0],

[ 0, 0, 1, 0],

[ 0, 0, 0, 1] ] )

T_2_in_1 = np.matrix( [ [ c[2], -s[2], 0, a[2]],

[ 0, 0, -1, 0],

[ s[2], c[2], 0, 0],

[ 0, 0, 0, 1] ] )

T_3_in_2 = np.matrix( [ [ c[3], -s[3], 0, a[3]],

[ s[3], c[3], 0, 0],

[ 0, 0, 1, 0],

[ 0, 0, 0, 1] ] )

T_4_in_3 = np.matrix( [ [ c[4], -s[4], 0, a[4]],

[ s[4], c[4], 0, 0],

[ 0, 0, 1, 0],

[ 0, 0, 0, 1] ] )

T_5_in_4 = np.matrix( [ [ c[5], -s[5], 0, a[5]],

[ 0, 0, -1, 0],

[-s[5], -c[5], 1, 0],

[ 0, 0, 0, 1] ] )

T_F_in_5 = np.matrix( [ [ 1, 0, 0, footOffset],

[ 0, 1, 0, 0],

[ 0, 0, 1, 0],

[ 0, 0, 0, 1] ] )

T_1_in_W = T_0_in_W * T_1_in_0

T_2_in_W = T_1_in_W * T_2_in_1

T_3_in_W = T_2_in_W * T_3_in_2

T_4_in_W = T_3_in_W * T_4_in_3

T_5_in_W = T_4_in_W * T_5_in_4

T_F_in_W = T_5_in_W * T_F_in_5

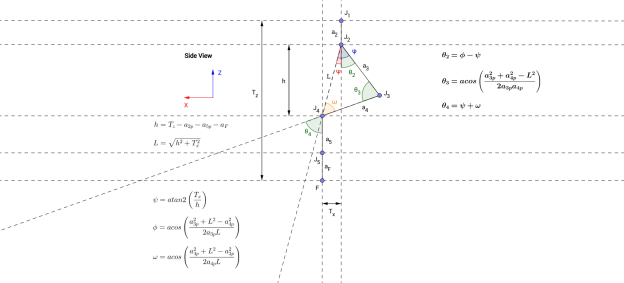

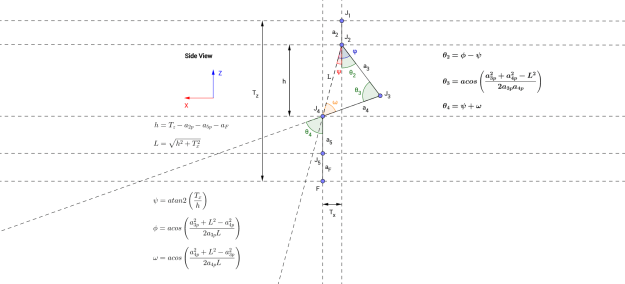

The following Python code is the leg’s Inverse Kinematics (click to expand):

def runIK(target):

# Solve Joint 1

num = target[1]

den = abs(target[2]) - footOffset

a0Rads = math.atan2(num, den)

angles[0] = math.degrees(a0Rads)

# Lengths projected onto z-plane

c0 = math.cos(a0Rads)

a2p = a[2]*c0

a3p = a[3]*c0

a4p = a[4]*c0

a5p = a[5]*c0

j4Height = abs(target[2]) - a2p - a5p - footOffset

j2j4DistSquared = math.pow(j4Height, 2) + math.pow(target[0], 2)

j2j4Dist = math.sqrt(j2j4DistSquared)

# # Solve Joint 2

num = target[0]

den = j4Height

psi = math.degrees( math.atan2(num, den) )

num = pow(a3p, 2) + j2j4DistSquared - pow(a4p, 2)

den = 2.0*a3p*j2j4Dist

if abs(num) <= abs(den):

phi = math.degrees( math.acos(num/den) )

angles[1] = - (phi - psi)

# Solve Joint 3

num = pow(a3p, 2) + pow(a4p, 2) - j2j4DistSquared

den = 2.0*a3p*a4p

if abs(num) <= abs(den):

angles[2] = 180.0 - math.degrees( math.acos(num/den) )

# # Solve Joint 4

num = pow(a4p, 2) + j2j4DistSquared - pow(a3p, 2)

den = 2.0*a4p*j2j4Dist

if abs(num) <= abs(den):

omega = math.degrees( math.acos(num/den) )

angles[3] = - (psi + omega)

# Solve Joint 5

angles[4] = - angles[0]

runFK(angles)

The following Arduino code is the serial read code for the Arbotix-M (click to expand):

#include

int numOfJoints = 5;

void setup()

{

Serial.begin(38400);

}

void loop()

{

}

void serialEvent() {

int id[numOfJoints];

int pos[numOfJoints];

while (Serial.available())

{

for (int i = 0; i < numOfJoints; ++i)

{

id[i] = Serial.parseInt();

pos[i] = Serial.parseInt();

}

if (Serial.read() == '\n')

{

for (int i = 0; i 0) && (0 <= pos[i]) && (pos[i] <= 1023 ) )

SetPosition(id[i], pos[i]);

}

}

}

}