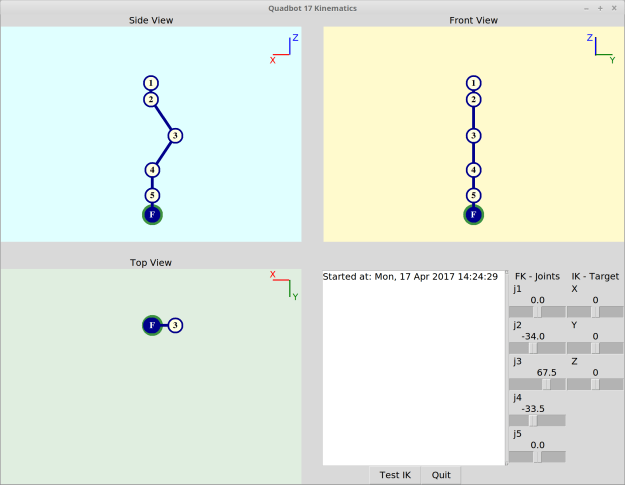

The Python test program has been updated to include the additional spine joints, transformation between the robot and world coordinates, and leg targets which take orientation into account.

This test script is used in anticipation for controlling the actual robot’s servos.

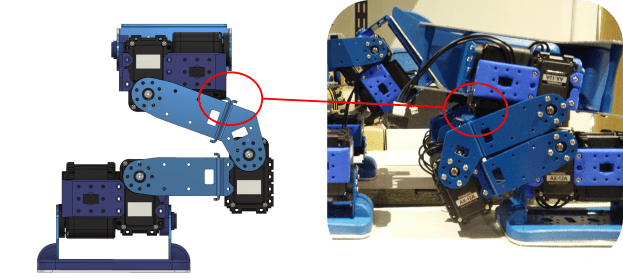

Spine joints

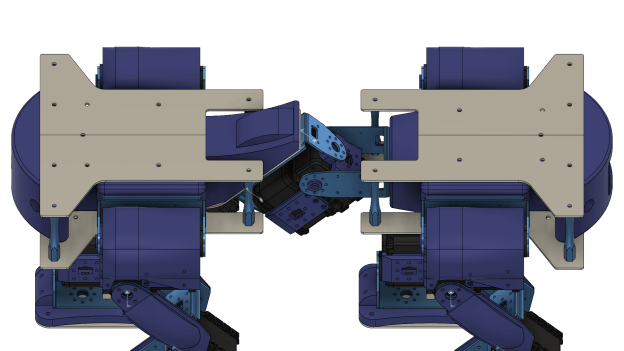

The “spine” consists of two joints which will allow the front of the robot to pitch and yaw independently of the rear. This will give it more flexibility when turning and handling uneven terrain, as well as other tasks such as aiming its sensors at the world.

Since the spine joints are quite simple, I don’t think there is any need to create IK for this section.

The “spine” joints separate the body of the quadruped between mostly similar front and rear sections.

Body and spine orientation

The robot body now takes into account that it has to be oriented w.r.t. the “world”. This will be physically achieved by the information acquired by an IMU sensor. If the robot is tilted forwards, the targets for the legs will have to be adjusted so that the robot maintains its balance.

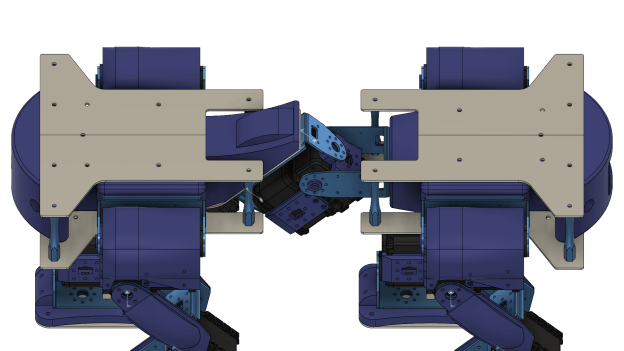

I have defined the kinematics in a way that if the the robot was to rotate w.r.t. the world, the whole body rotates (this can be achieved by moving the test Roll/Pitch/Yaw sliders). However if the servo joints of the spine are moved (test joint 1 / joint 2 sliders) the rear section of the robot will move w.r.t the world, and the rear legs will move with it, while the front section won’t change w.r.t. the world.

In order to achieve this, the leg IK had to be updated so that now the base frames of the front legs are linked to the front section of the robot, and the base frames of the rear legs are linked to the rear section.

You might notice while orientation will be defined by an IMU, pure translation (movement in XYZ) in the world is not considered for now, as it is meaningless without some sort of localisation capability in place. This could be achieved by a sensor (see below), but is an entirely separate challenge for a long way down the line (hint: SLAM).

New leg targets: Foot roll/pitch can be attained (within limits). In addition, the robot base can be positioned with respect to an outside world frame.

Original leg targets: The feet are always pointing vertical to the ground.

Target roll and yaw

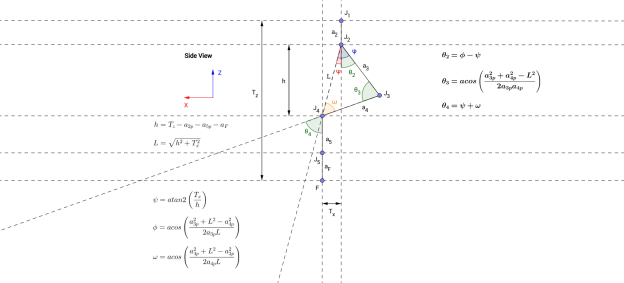

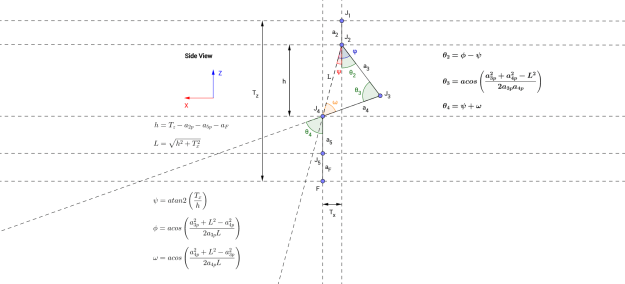

Initially, the leg target was simply a position in 3D for the foot link, and the foot was always pointing perpendicular to the ground, which made the inverse kinematics fairly easy. In version 2, the target orientation is now also taken into account. Actually, the pitch and roll can be targeted, but yawing cannot be obtained, simply because of the mechanics of the legs. Yawing, or turning, can be done by changing the walking gait pattern alone, but the idea is that the spine bend will also aid in steering the robot (how exactly I don’t know yet, but that will come later!).

Getting the kinematics to work were a bit trickier than the original version, mainly because the “pitching” orientation of the leg can only be achieved by the positioning of joint 4, whereas the “rolling” orientation can only be achieved by the positioning of joint 5. The available workspace of the foot is also somewhat limited, in part due to that missing yaw capability. Particularly at positions when the leg has to stretch sideways (laterally) then certain roll/pitching combinations are impossible to reach. Nevertheless, this implementation gives the feet enough freedom to be placed on fairly uneven surfaces, and not be constrained to the previously flat plane.

The next challenge that follows from this, is how can realistic target positions and orientations be generated (beyond predetermined fixed walking gaits), to match what the robot sees of the world?

To answer this, first I need to decide how the robot sees the world: Primarily it will be by means of some 3D scanner, such as the ones I’ve looked into in the past, or maybe the Intel RealSense ZR300 which has recently caught my attention. But this alone might not be sufficient, and some form of contact sensors on the feet may be required.

The big question is, should I get a RealSense sensor for this robot ??? 🙂

Updated code can be found on GitHub (single-file test script is starting to get long, might be time to split up into class files!).