I added some exponential smoothing to the original walking gaits, to smooth the edges of the trajectories and create a more natural movement.

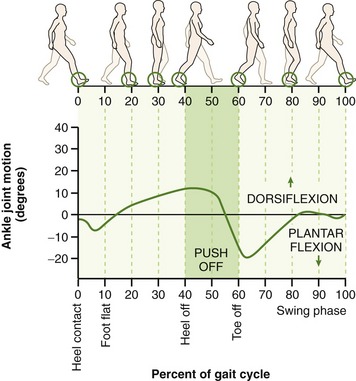
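As a rough illustration of the idea (not the actual gait code), simple exponential smoothing of a trajectory can be sketched as below. The function name `exponential_smooth`, the smoothing factor `alpha` and the sample trajectory are all assumptions made for this example.

```python
def exponential_smooth(values, alpha=0.5):
    """Simple exponential smoothing.

    s[0] = x[0]
    s[t] = alpha * x[t] + (1 - alpha) * s[t-1]

    alpha is a hypothetical smoothing factor in (0, 1]; smaller values
    smooth more strongly but lag further behind the original trajectory.
    """
    if not values:
        return []
    smoothed = [values[0]]
    for x in values[1:]:
        smoothed.append(alpha * x + (1 - alpha) * smoothed[-1])
    return smoothed


# Made-up normalised trajectory with sharp edges (e.g. a foot lift).
trajectory = [0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0]
smoothed = exponential_smooth(trajectory, alpha=0.5)
print(smoothed)
```

The sharp step up and down in the input becomes a gradual rise and decay, which is what rounds off the edges of the trajectories.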

I then added a ±30° pitching motion to what best approximates the ‘ankle’ (4th joint), to emulate the heel off and heel strike phases of the gait cycle.

The range of motion of the right ankle joint. Source: Clinical Gate.

I realised however that applying the pitch to the foot target is not exactly the same as applying the pitch to the ankle joint. This is because, in the robot’s case, the ‘foot target’ is located at the centre of the lowest part of the foot, which comes into contact with the ground, whereas the ankle is higher up, at a distance of two link lengths (in terms of the kinematics, that’s a4+a5). The walking gait thus does not end up producing the expected result (this is best explained by the animations at the end).

To account for this, I simply had to adjust the forward/backward and up/down position of the target, in response to the required pitch.

With some simple trigonometry, the fwd/back motion is adjusted by $d \sin\theta$, while the up/down motion is adjusted by $d\,(1 - \cos\theta)$, where $\theta$ is the pitch angle and $d$ is the distance a4+a5 noted previously.

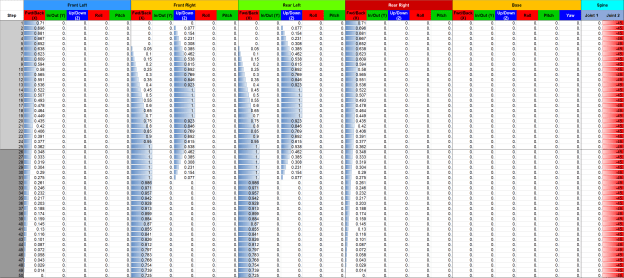

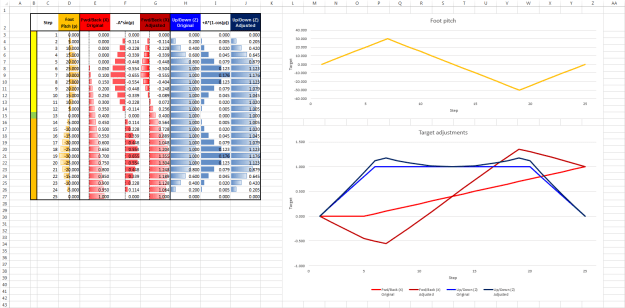
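The adjustment can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions, not the actual gait code: the function name `compensate_for_pitch`, the argument names, and the sign conventions (positive pitch shifts the target forward and up) are all hypothetical, and `d` stands for the a4+a5 distance expressed in the same units as the target translations.

```python
import math


def compensate_for_pitch(fwd, up, pitch_deg, d):
    """Shift a foot target so the pitch acts about the ankle.

    fwd, up   : original fwd/back and up/down target translations
    pitch_deg : required foot pitch in degrees
    d         : ankle-to-foot-target distance (a4 + a5), same units as fwd/up

    Assumed sign convention: positive pitch moves the target forward and up.
    Flip the signs if the robot's axes are defined the other way round.
    """
    theta = math.radians(pitch_deg)
    fwd_adjusted = fwd + d * math.sin(theta)
    up_adjusted = up + d * (1.0 - math.cos(theta))
    return fwd_adjusted, up_adjusted


# Example: sweep the pitch across the +/-30 degree range with a made-up d.
for pitch in (-30.0, 0.0, 30.0):
    fwd_adj, up_adj = compensate_for_pitch(0.0, 0.0, pitch, d=1.0)
    print(f"pitch={pitch:+.0f} deg  fwd={fwd_adj:+.3f}  up={up_adj:+.3f}")
```

Note that the up/down correction is the same for +30° and −30°, since it depends on the cosine of the pitch, whereas the fwd/back correction changes sign with the pitch direction.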

Here are the details showing how the targets are adjusted for one particular phase in the creep walk gait (numbers involving translations are all normalised because of the way the gait code currently works):

Creep walk gait, 25-step window where the foot target pitch oscillates between ±30°. Original target translations (fwd/back, up/down) are shown compared to the ones adjusted in response to the pitch.

The final results of the foot pitching, with and without smoothing, are shown below: